Google MediaPipe Hand Tracking Bodes Well For AR And Smartglasses

Google may have been less than consistent in its commitment to virtual and augmented reality products but, almost ironically, it has been developing the technologies that could advance those markets. In particular, Google has been investing heavily in computer vision and machine learning that don't need to be offloaded to powerful servers in the cloud. Its latest research project, if it truly takes off, could make hand and finger tracking as affordable as a smartphone and its camera.

Many VR and AR systems rely on head tracking to position and orient the user inside the digital world, but that's pretty much all head tracking can do. When it comes to reproducing normal hand movements and gestures in the virtual world, most systems require additional sensors, cameras, and equipment. Google Research's MediaPipe framework, in contrast, needs nothing more than a smartphone.

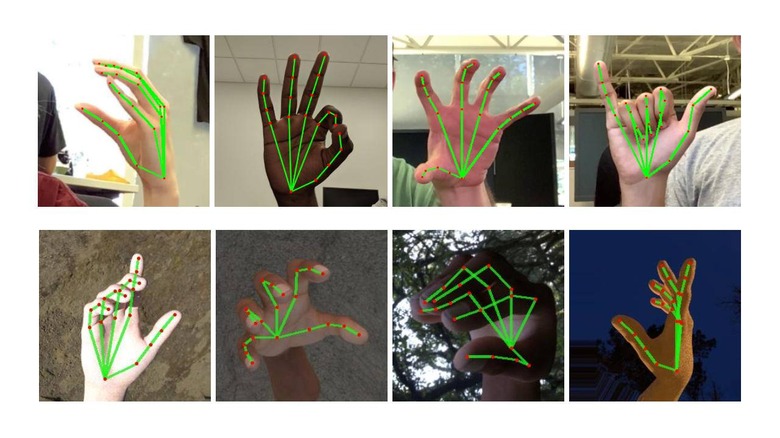

As with any Google magic act, the secret has to do with machine learning and AI. The researchers try to condense the process down to more understandable concepts, but it all boils down to using computer vision to first detect and analyze the palm. Everything else, including the position of the fingers, is computed and predicted based on the initial bounding box for the hand. MediaPipe then proceeds to recognize gestures from the 21 3D keypoints produced by the earlier stages.
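To make that last step a little more concrete, here is a minimal Python sketch of how gestures could be read off 21 3D keypoints. It is not Google's code. The landmark ordering (wrist first, then four points per finger from base to tip), the 160-degree "straight finger" threshold, the gesture table, and the `make_hand` helper that fabricates synthetic keypoints are all assumptions made for illustration.

```python
import math

# Assumed layout of the 21 keypoints: index 0 is the wrist, followed by
# four points per finger, ordered from the base of the finger to its tip.
FINGERS = {
    "thumb": (1, 2, 3, 4),
    "index": (5, 6, 7, 8),
    "middle": (9, 10, 11, 12),
    "ring": (13, 14, 15, 16),
    "pinky": (17, 18, 19, 20),
}

# Hypothetical gesture table keyed by (thumb, index, middle, ring, pinky),
# where True means the finger is extended.
GESTURES = {
    (False, False, False, False, False): "fist",
    (True, True, True, True, True): "open palm",
    (False, True, False, False, False): "pointing",
    (False, True, True, False, False): "victory",
    (True, False, False, False, False): "thumbs up",
}


def joint_angle(a, b, c):
    """Return the angle at point b, in degrees, formed by points a-b-c."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    norm = math.sqrt(sum(x * x for x in v1)) * math.sqrt(sum(x * x for x in v2))
    if norm == 0:
        return 0.0  # degenerate input: treat as fully bent
    cos = sum(x * y for x, y in zip(v1, v2)) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))


def finger_states(landmarks, straight_threshold=160.0):
    """Map each finger name to True if both inner joints are nearly straight."""
    states = {}
    for name, (base, j1, j2, tip) in FINGERS.items():
        bend = min(
            joint_angle(landmarks[base], landmarks[j1], landmarks[j2]),
            joint_angle(landmarks[j1], landmarks[j2], landmarks[tip]),
        )
        states[name] = bend >= straight_threshold
    return states


def classify(landmarks):
    """Look up the extended/bent pattern in the gesture table."""
    states = finger_states(landmarks)
    key = tuple(states[name] for name in FINGERS)
    return GESTURES.get(key, "unknown")


def make_hand(extended):
    """Build 21 synthetic keypoints; `extended` holds five booleans."""
    points = [(0.0, 0.0, 0.0)]  # wrist
    for i, is_straight in enumerate(extended):
        x = 0.1 * i
        if is_straight:
            points += [(x, 0.2, 0.0), (x, 0.3, 0.0), (x, 0.4, 0.0), (x, 0.5, 0.0)]
        else:
            # Curl the finger back toward the palm with two right-angle bends.
            points += [(x, 0.2, 0.0), (x, 0.3, 0.0), (x, 0.3, 0.1), (x, 0.2, 0.1)]
    return points


if __name__ == "__main__":
    print(classify(make_hand([False, True, True, False, False])))
```

In a real pipeline the keypoints would come from the hand tracking model rather than from `make_hand`, and the threshold would need tuning against actual camera data.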

What makes all of this even more impressive is how little hardware is required to do it. The goal of MediaPipe is to provide hand gesture recognition in real time and on-device, which in this case means a smartphone. In addition to the privacy and performance implications, running on-device also simplifies the requirements for having accurate hand recognition on any device.

The applications of such a framework could be quite staggering. Smartphones could implement hand gesture control without dedicated sensors like Project Soli. Smartglasses and XR headsets could use the same cameras and processors they already have anyway. All that's left is for Google to develop its true VR and AR ecosystem and stick to it for years to come.