Facebook data grows by over 500 TB daily

If you ever step back and think about how much data massive online services such as the social network Facebook and the search engine Google generate and store on any given day, the amount is hard to fathom. All you need to do is look at your own Facebook news feed to see how much data is added every day. That data includes everything from simple text status updates to large video and photo files.

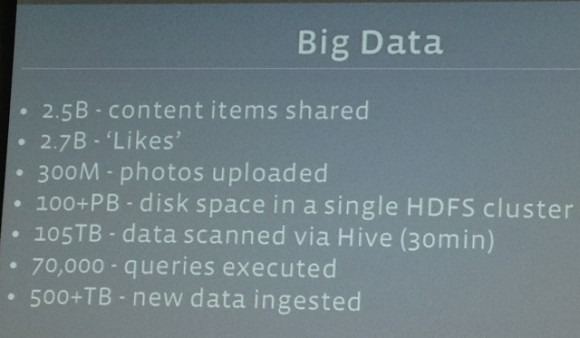

Facebook recently unveiled some statistics on the amount of data its system processes and stores. According to Facebook, its data system processes 2.5 billion pieces of content each day, amounting to more than 500 terabytes of data daily. Users also generate 2.7 billion Like actions and upload 300 million new photos every day.

Breaking the data down a bit, Facebook says that it scans roughly 105 TB of data every half hour. While 500 TB is a lot of data, it's a mere drop in the bucket compared to the amount stored in a single Facebook Hadoop disk cluster. According to Facebook's VP of engineering, Jay Parikh, that cluster holds 100 petabytes of data. A single petabyte is 1,048,576 gigabytes when counted in binary units, or 1,000,000 gigabytes in decimal units.
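To put these figures in perspective, here is a rough back-of-the-envelope sketch in Python. It treats the quoted numbers as exact (the 500 TB figure is really a lower bound) and assumes decimal units unless the binary convention is shown explicitly; the constant and function names are purely illustrative.

```python
# Back-of-the-envelope arithmetic on the figures quoted above.
# Assumption: TB and PB are decimal units (1 PB = 1,000 TB) unless a
# function is explicitly called with the binary base of 1024.

SCAN_TB_PER_HALF_HOUR = 105   # data scanned every 30 minutes
INGEST_TB_PER_DAY = 500       # new data per day (quoted as "500+")
CLUSTER_PB = 100              # size of the Hadoop disk cluster

HALF_HOURS_PER_DAY = 24 * 2


def scanned_tb_per_day() -> int:
    """Total TB scanned in one day at 105 TB per half hour."""
    return SCAN_TB_PER_HALF_HOUR * HALF_HOURS_PER_DAY


def days_of_ingest_in_cluster(tb_per_pb: int = 1000) -> float:
    """Days of 500 TB/day ingest needed to equal the 100 PB cluster."""
    return CLUSTER_PB * tb_per_pb / INGEST_TB_PER_DAY


def gb_per_pb(base: int) -> int:
    """Gigabytes in one petabyte: base=1000 (decimal) or base=1024 (binary)."""
    return base ** 2


if __name__ == "__main__":
    print(scanned_tb_per_day())         # 5040
    print(days_of_ingest_in_cluster())  # 200.0
    print(gb_per_pb(1024))              # 1048576
    print(gb_per_pb(1000))              # 1000000
```

In other words, Facebook scans roughly ten times as much data each day as it ingests, and even at 500 TB per day it would take about 200 days of new data to fill a 100 PB cluster.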

That is simply a staggering amount of data. Parikh says that Facebook believes it operates the single largest Hadoop cluster in the world. That certainly sounds plausible to me. It's more data than I can wrap my brain around.

[via TechCrunch]