Algorithm could remove obstructions from a photo

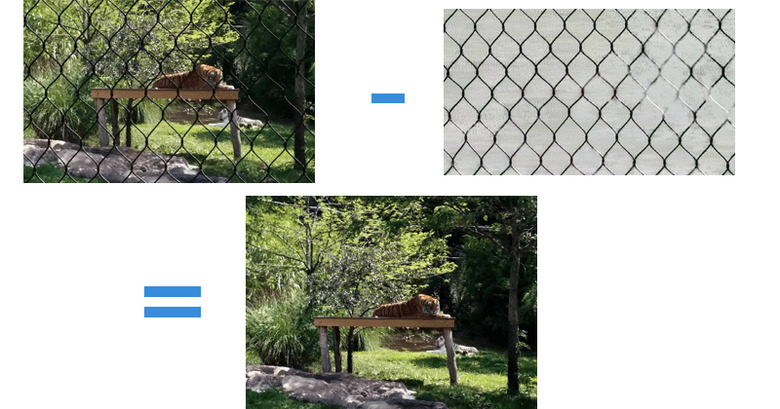

Anyone who has tried to use a digital camera will have, at one point or another, run into a case where an almost perfect, once-in-a-lifetime shot is ruined by something as simple as a chain-link fence or reflections on a clear window. Although photo editing skills and tools have come a long way, they can only do so much. That, however, may soon be a thing of the past, thanks to a new algorithm from researchers at Google and MIT that aims to almost magically make these obstructions disappear.

Of course, it isn't really magic, and it does require some slight movement on the part of the user, like when taking a panoramic shot. In essence, the algorithm separates what it thinks is the foreground, which is usually where the occluding object is, from the background, where the actual subject usually is. It then removes the foreground, leaving the background intact and clear. To tell the two apart, the algorithm relies on the principle of parallax: as the camera moves, things closer to it appear to shift more between frames than things far away. By analyzing which parts of the scene seem to be moving faster, the algorithm is able to distinguish the foreground from the background.
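To make the parallax idea concrete, here is a minimal Python sketch. It is not the researchers' method. It assumes a simple pinhole camera sliding sideways, a toy scene with made-up depths (a fence at 1 m, a subject at 20 m), and a crude midpoint threshold for splitting the two layers. The function names `parallax_shift` and `split_layers` are hypothetical, chosen only for illustration.

```python
import numpy as np

def parallax_shift(focal_px, camera_shift_m, depth_m):
    """Apparent image shift, in pixels, of a point at depth_m when a
    pinhole camera translates sideways by camera_shift_m."""
    return focal_px * camera_shift_m / depth_m

def split_layers(motion_px):
    """Mark a pixel as foreground (True) when its motion is above the
    midpoint between the smallest and largest observed motion.
    This threshold is an illustrative assumption, not the real algorithm."""
    threshold = (motion_px.min() + motion_px.max()) / 2.0
    return motion_px > threshold

# Toy scene (assumed values): subject 20 m away, fence wires 1 m away.
depth = np.full((4, 6), 20.0)
depth[:, ::3] = 1.0  # a vertical fence wire in every third column

# Camera moves 2 cm sideways; focal length of 1000 px is an assumption.
motion = parallax_shift(focal_px=1000.0, camera_shift_m=0.02, depth_m=depth)
foreground = split_layers(motion)

print(motion[0])      # per-pixel shift along the first row
print(foreground[0])  # True where the fence is
```

In this toy setup the nearby fence shifts twenty times as far as the distant subject, which is why even a crude threshold can pull the two layers apart. A real photo would first need its per-pixel motion estimated from the frames themselves, which is the hard part this sketch skips.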

It isn't the first or only time researchers have tried to solve the problem of occlusions in photographs, but according to Google research scientist Michael Rubinstein, this algorithm is more multipurpose. That said, it isn't perfect. Due to its reliance on parallax, it can't properly fix a photo with a fast-moving subject, like in a sports game. It also doesn't handle obstructions that are themselves moving. And finally, it can only handle one obstruction at a time. So if you have a chain-link fence *and* a reflective glass at the same time, you're out of luck.

Still, for most basic use cases, the algorithm works well enough that Rubinstein says even Google might be interested in it, perhaps for a future feature in its Android camera app. For now, however, nothing concrete has been planned for it.