MedRef For Glass Adds Face-Recognition To Google's Wearable

If there's one thing people keep asking for from Google Glass and other augmented reality headsets, it's facial recognition to bypass those "who am I talking to again?" moments. The first implementation of something along those lines for Google's wearable has been revealed: MedRef for Glass, a hospital management app by NeatoCode Techniques that can attach patient photos to individual health records and later recognize those patients through face-matching.

Cooked up at a medical hackathon, the app is still in its early stages, though it does show how a wearable computer like Glass could be integrated into a doctor's or nurse's workflow. MedRef allows the wearer to make verbal notes and then recall them, as well as add photos of the patient and other documents to their records, all hands-free.

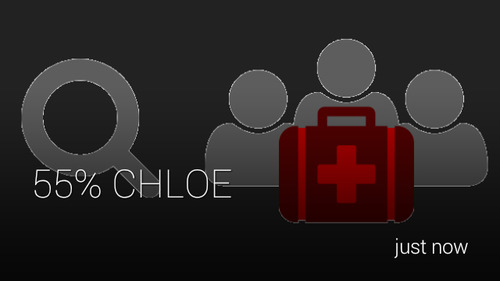

However, it's the facial recognition that is arguably the most interesting part of the app. All the processing is done in the cloud, with the current demo using the Betaface API. First, Glass is loaded up with photos of the patient; then a new photo is compared to the "facial ID" those source shots produce, with the matching tech giving a percentage likelihood of it being the same person.
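The enroll-then-compare flow described above can be sketched in a few lines of Python. This is a rough illustration only, not MedRef's code and not the Betaface API: it assumes each photo has already been reduced to a numeric feature vector by some face-analysis service, and the names `enroll`, `match_percent`, and `best_match` are hypothetical. Cosine similarity stands in for whatever scoring the real service uses.

```python
import math
from typing import Dict, List, Sequence, Tuple

# ASSUMPTION: a "photo" here is a feature vector already extracted by some
# face-analysis service. This does not call or imitate the Betaface API.
Vector = Sequence[float]


def _normalize(vec: Vector) -> List[float]:
    """Scale a vector to unit length so only its direction matters."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def enroll(photos: List[Vector]) -> List[float]:
    """Build one template (a stand-in "facial ID") from several source photos."""
    if not photos:
        raise ValueError("at least one photo is required")
    normalized = [_normalize(p) for p in photos]
    dim = len(normalized[0])
    mean = [sum(p[i] for p in normalized) / len(normalized) for i in range(dim)]
    return _normalize(mean)


def match_percent(template: Vector, photo: Vector) -> float:
    """Score a new photo against a template as a 0-100 percentage."""
    cosine = sum(a * b for a, b in zip(template, _normalize(photo)))
    return round(max(0.0, cosine) * 100, 1)


def best_match(templates: Dict[str, Vector], photo: Vector) -> Tuple[str, float]:
    """Return the enrolled ID with the highest score, plus that score."""
    scores = {pid: match_percent(t, photo) for pid, t in templates.items()}
    top = max(scores, key=scores.get)
    return top, scores[top]


if __name__ == "__main__":
    # Made-up vectors for two hypothetical patients.
    templates = {
        "patient_a": enroll([[1.0, 0.0, 0.0], [0.9, 0.1, 0.0]]),
        "patient_b": enroll([[0.0, 1.0, 0.0]]),
    }
    print(best_match(templates, [1.0, 0.05, 0.0]))
```

Averaging several source shots into one template is one simple way to make the stored ID less sensitive to any single photo; a real service may combine them quite differently.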

MedRef for Glass video demo:

There are still some rough edges to be worked on, admittedly. In the demo above, for instance, even with just two individuals known to Glass, the face-recognition system can only give a 55-percent probability that it has matched a person. Any commercial implementation would also need to be able to see past bruising or surgery scars, which could be commonplace in a hospital and could change over the course of a patient's stay.

Currently, the MedRef app data is limited to a single Glass. Far more useful, though, would be the planned group access, which would allow, say, multiple surgery staff or a group of doctors to more readily find notes for patients they might not have previously seen. Meanwhile, the form-factor of Glass would leave both hands free, something we've seen other wearables attempt, such as the HC1 running Paramedic Pro.

Nonetheless, it's an ambitious concept, and one which could come on in leaps and bounds as cloud-processing of face-matching gets more capable. "In the future," NeatoCode suggests, "on more powerful hardware and APIs, facial recognition could even be written to run all the time." That could mean an end to awkward moments at conferences and parties where someone remembers your name but you can't recall theirs.

There's more detail at the MedRef project page, and the code has been released as an open-source project on GitHub.

SOURCE: SelfScreens