Making VR Mainstream: SMI's Eye-Tracking Magic Hands-On

Eye-tracking tech could finally go mainstream with head-mounted displays like Oculus Rift and Sony Project Morpheus, with pupil spotting specialist SMI readying new consumer-level hardware for gaming, VR, and social networking. SensoMotoric Instruments may not be a household name, but it might be one you end up silently thanking if you've ever gotten motion-sick from using a virtual reality headset. I caught up with the company to find out how understanding eyes may be more important – and closer to the market – than you think.

SMI isn't new to eye-tracking. The company has been in the eye research business for 22 years, SMI director Christian Villwock told me; 9 out of 10 Lasik eye-surgery machines used SMI eye-tracking components until the company sold off that division around eighteen months ago.

There was even a homegrown VR headset, though it was axed in the early 2000s. "We were far too early," Villwock admits.

About three years ago, SMI decided the time was right for another attempt. Villwock took charge of a new team exploring alternative OEM uses for the company's eye-tracking technology, taking components more commonly included in research headsets used by psychologists and UI designers, and repurposing them for other fields. There have already been a few wins, too, including alternative input methods for those who can only move their eyes, as well as in new lie-detection systems from one of the big names in polygraph machines.

Meanwhile, interest in head-mounted displays and the use of eye-tracking in gaming was rising, driven by names like Oculus. Villwock showed me two demonstrations of how manufacturers – whom he wouldn't name – will be using SMI's system in the very near future.

The first is a sensor bar dubbed RED-N, a wide, slim rod with a USB 3.0 socket on one end, containing IR light emitters and a camera. It's a reference design – SMI expects customers to integrate it into the lower bezel of laptop and desktop displays – but it's fully functional: after you calibrate it by looking at two or more points on the screen in sequence, the bar can track wherever your gaze falls.
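SMI didn't detail how that calibration works, but the general idea of mapping raw eye measurements onto screen positions can be illustrated. What follows is a minimal sketch, assuming a simple per-axis linear fit; the function names and the notion of a normalized "raw" gaze reading are hypothetical and are not taken from SMI's software.

```python
# Hypothetical sketch: map raw gaze readings to screen pixels using
# two or more calibration points. This is NOT SMI's algorithm; it assumes
# the raw reading varies linearly with gaze position along each axis.

def fit_axis(raw, target):
    """Least-squares fit of target ~= gain * raw + offset for one axis."""
    n = len(raw)
    mean_r = sum(raw) / n
    mean_t = sum(target) / n
    var = sum((r - mean_r) ** 2 for r in raw)
    if var == 0:
        raise ValueError("calibration points must differ along this axis")
    cov = sum((r - mean_r) * (t - mean_t) for r, t in zip(raw, target))
    gain = cov / var
    return gain, mean_t - gain * mean_r


def calibrate(raw_points, screen_points):
    """Return a function that converts a raw (x, y) reading to screen pixels."""
    if len(raw_points) < 2 or len(raw_points) != len(screen_points):
        raise ValueError("need two or more matching calibration points")
    gx, ox = fit_axis([p[0] for p in raw_points], [p[0] for p in screen_points])
    gy, oy = fit_axis([p[1] for p in raw_points], [p[1] for p in screen_points])

    def to_screen(raw):
        return (gx * raw[0] + ox, gy * raw[1] + oy)

    return to_screen


# The user looks at two known on-screen dots while raw readings are captured.
to_screen = calibrate(
    raw_points=[(0.0, 0.0), (1.0, 1.0)],
    screen_points=[(0, 0), (1920, 1080)],
)
gaze_px = to_screen((0.5, 0.5))
```

Adding more calibration points to the two lists would average out measurement noise, which is one plausible reason a tracker might ask for more than the minimum of two.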

It's surprisingly accurate, too: Sony used exactly this technology to power the eye-controlled inFAMOUS: Second Son demo at GDC 2014 earlier this year. SMI doesn't have a copy of the PlayStation title to demo, so it came up with its own game instead. Your eyes direct the on-screen character and target items, relegating the control pad to simply moving forward and backward, as well as firing.

I'm not much of a gamer, but after a minute or two it feels fairly intuitive to rely on your eyes rather than a joystick. The SMI system can recognize eyes at roughly 40 to 95cm from the display, and will work on screens of up to 28 inches in size; the camera is around 6mm thick, so it should fit into most bezels. Integrated in bulk, Villwock suggests it could add just $2 onto the bill-of-materials for a notebook, and says several manufacturers have already expressed interest.

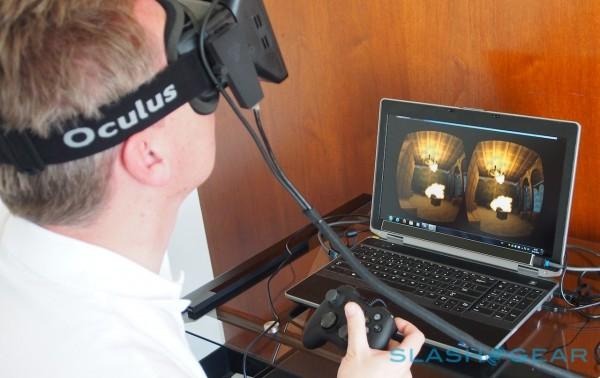

SMI's second demonstration is arguably more impressive, not to mention potentially having a bigger impact on VR in general. Using a modified Oculus Rift developer kit – Villwock was at pains to point out that it had simply been bought off-the-shelf, and wasn't an indication that SMI was actually working with Oculus – the team added in eye-tracking support alongside the existing head-tracking. In the first-gen prototype, that required grinding away some of the lenses to fit in the cameras above the IR emitters; you don't notice it when you're wearing the headset, though Villwock says SMI has already cooked up a new design that avoids the modification altogether.

As in the first demo, the Oculus integration means you can not only turn your head to explore a virtual environment, but also simply look at objects to target them. I was able to shoot at crates, sending them bouncing into the air, and then glance up to re-target them and keep them aloft, all without moving my head. It required no calibration, though by optionally getting the wearer to focus briefly on a dot in the center of the display, SMI can achieve roughly half-degree accuracy.

The more important advantage is one you might not even notice, however, at least until you take off your HMD and find you're not feeling nauseous. Like a lot of people, I can find myself getting queasy after using virtual reality goggles for a while, like a kind of virtual-motion sickness. That, Villwock explained to me, is often because head-mounted displays aren't taking into account interpupillary distance.

VR glasses like Rift work by showing you two 2D images – one for each eye, each with a slightly offset perspective of the digital scene – which are then combined by the brain into a single, 3D view. Problem is, the distance between the pupils differs from user to user. Imagine a center line running down through your head, the central axis around which it turns when you look around: if the interpupillary distance isn't right, that axis is effectively shifted.

That leads to nausea, because the brain doesn't see the movement it expects to. By tracking the exact position of the eyes, however, SMI's tech can negate the problem, telling whatever software is running how to best offset each of the 2D pictures to suit the wearer.
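To make the idea concrete, here is a minimal sketch of how rendering software might turn measured pupil positions into per-eye camera offsets. It assumes the tracker reports each pupil's horizontal position in millimeters relative to the headset's center line; the function names and that reporting format are hypothetical, and this is not SMI's SDK.

```python
# Hypothetical sketch: derive per-eye virtual camera offsets from tracked
# pupil positions. Positions are horizontal, in millimeters, measured from
# the headset's center line (negative = wearer's left). Not SMI's API.

def measured_ipd_mm(left_pupil_x_mm, right_pupil_x_mm):
    """Interpupillary distance implied by the two tracked pupil positions."""
    ipd = right_pupil_x_mm - left_pupil_x_mm
    if ipd <= 0:
        raise ValueError("right pupil must lie to the right of the left pupil")
    return ipd


def stereo_camera_offsets_mm(ipd_mm):
    """Place the left and right virtual cameras half an IPD either side of center."""
    half = ipd_mm / 2.0
    return (-half, half)


# A wearer whose pupils are tracked at -31mm and +33mm from the center line.
ipd = measured_ipd_mm(-31.0, 33.0)
left_cam, right_cam = stereo_camera_offsets_mm(ipd)
```

A renderer would then shift each eye's viewpoint by its offset before drawing that eye's image, so the separation between the two pictures matches the wearer rather than a fixed default.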

I didn't have enough time to test that out conclusively, but SMI is clearly making a compelling case to HMD designers. Villwock expects the technology to be in at least one first-generation consumer head-mounted display launch – he wouldn't confirm which – and says he's in talks with most of the names about what SMI is doing. There'll be an SDK for developers to integrate eye-detection and interpupillary distance measurement into their software, too, and hardware costs are expected to be in line with the RED-N bar, at just a couple of dollars.

It's not just gaming, though, where SMI sees potential. With Facebook buying Oculus, attention has turned to the possibility of virtual reality social networking, particularly with Facebook's engineering VP Cory Ondrejka being the co-founder of Second Life.

Eye-tracking could be vital there, Villwock argues, because it's such an essential aspect of how we interact with each other. If Facebook does indeed create some sort of virtual arena in which members can chat, collaborate, and play, then their being able to look directly at each other – gazes meeting over a crowded simulation – will add considerably to how realistic it all feels.

Villwock also sees potential in virtual video conferencing – being able to better engage with avatars of remote callers – as well as in browsing virtual stores and in sports training.

The HMD industry is still hunting for its "killer use-case," Villwock says, and SMI is betting on eye-tracking being the key to unlocking that. I'm still not entirely convinced that I'll be doing my socializing virtually any time soon, but cutting the VR sickness would be a huge advantage, and would remove one of the lingering barriers to mainstream adoption.