Google Glass augmented reality gets real-time demo

Last month we saw augmented reality on Google Glass when developer Brandyn White created an augmented reality UI that uses the Mirror API to display information over still images. Now White and fellow OpenGlass developer Andrew Miller have demonstrated AR in real time. This opens the door to overlaying useful information, such as restaurant ratings and product reviews, on whatever is directly in front of you, as you see it.

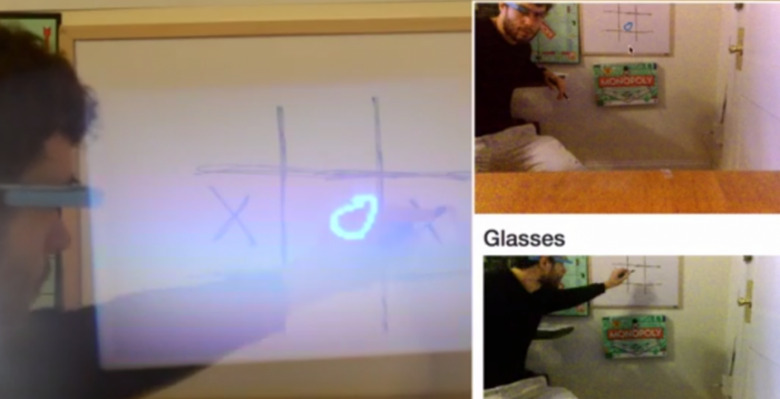

It works by capturing images from a camera's video output, modifying them based on your input and specifications, and then displaying the result on Glass through the Mirror API. Because the project relies on the Mirror API, the OpenGlass team hopes it can stay within Google's Terms of Service. In practice, you could play tic-tac-toe with a friend on the other side of the world while you both look at a game board that is physically in front of you.

Yes, there is currently a four-second image processing delay, but that is largely due to server and Google Glass latency. Tests on a local server have already cut processing time to three seconds.

Glass Explorers who want to try it can access the open-source OpenGlass library on GitHub right now. White and Miller previously made a Google Glass app that identifies objects for people with visual impairments. Time will tell how augmented reality on Glass advances, but these early demos point to further expansion and integration, especially if the wearable goes mainstream.

VIA SelfScreens