Facebook psych experiment explained, Andreessen chimes in

Facebook is, unsurprisingly, embroiled in yet another scandal. Surprisingly, it isn't directly related to privacy, though it comes quite close. The social networking giant has been revealed to have manipulated its news feed ever so slightly in order to see the effects on the moods of its users. It sounds almost harmless until you learn that the findings were recently published in a Proceedings of the National Academy of Sciences (PNAS) paper.

To be fair, the experiment, if you could call it that, took place in 2012, at least according to Facebook, and would probably never have come to light if the paper had not been published. Of course, that brings up a whole different set of questions and problems. Nonetheless, according to Adam Kramer, one of the Facebook researchers who co-authored the paper, the news feed manipulation took place for only one week in early 2012 and affected only 0.04 percent, or 1 in 2,500, of Facebook's users. The experiment was conducted, according to Kramer, because Facebook cared about the emotional impact of the service on its users.

According to the conventional wisdom cited, a greater proportion of negative posts would negatively affect the mood of readers, who might decide to cut back on using Facebook or even quit entirely. On the other hand, if the news feeds were mostly positive, users might feel left out of all the fun in the world and would likewise be depressed. In order to test that theory, Facebook proceeded to deprioritize some of the content in users' news feeds. Kramer insists that no posts were deleted and that they could still be viewed on friends' timelines. They just wouldn't rise to the top of the stack. The results were somewhat inconclusive, as the impact was too small to draw a statistically sound conclusion.

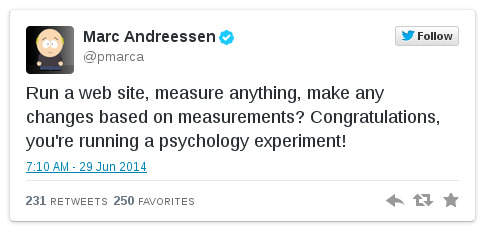

Naturally, this revelation caused no small amount of controversy and ill feeling, but Facebook isn't without its defenders. Marc Andreessen, famed co-founder of Netscape and now Facebook investor, took to Twitter to suggest that the whole situation is being blown out of proportion.

And again, to be fair, Facebook's Data Use Policy (which we all read in full, right?) does have a clause about using the information it receives for research. The thing is that this kind of research falls under "Internal operations". When Facebook's researchers published a paper based on it, it entered slightly different territory. Some are calling on Facebook to adhere to scientific ethics standards if it wishes to keep doing this kind of activity, standards that push researchers to inform test subjects about the experiment and give them the option to opt out. Kramer himself apologizes for the rush of emotions that it caused (which could be another covert Facebook psych experiment!) and admits that the benefits of the paper, however small, might not have justified the means. He also claims that Facebook is improving its internal review practices to address these concerns, but some might say that the damage has already been done.

One thing to take away from all of this is the emotional influence that Facebook, both the service and our contacts within it, has on our lives, at least for those of us still glued to it. There is no question that what we read in our news feeds and timelines will affect our own emotions, but what is really important is how we react to and act on them. This "scandal" makes the video below all the more relevant and meaningful.

SOURCE: @Adam Kramer, @Marc Andreessen

VIA: Engadget, VentureBeat