Augmented reality is the future our hands are not yet ready for

While virtual reality, or VR, continues to ramp up the hype, a related technology is also starting to rev up its engines. Augmented reality is that other movement trying to bring technology closer to our eyes, quite literally. But unlike VR, AR has a significantly more ambitious goal. Or rather, that is what proponents like Microsoft and Meta are trying to shape it into. Augmented reality could effectively revolutionize how we do computing in the future, replacing monitors and some forms of input with more "natural" counterparts. But while that might be easy for our eyes to take in, our hands might have a harder time adjusting.

The dream

Ever wished you had as many monitors as it takes to fit all your work and your life into? According to Meta, that will be possible with AR, and indeed their prototype, only in its second major iteration, already shows a lot of promise. Replacing even a single monitor can be both economical and productive. It can also be more private and secure, since only you can see what you're looking at. Unless you choose otherwise, of course. Having a near-infinite space for all your windows and objects, limited only by your own physical area and your computer's resources, is something tech-savvy and computer-dependent users can only dream about. And AR is close to making that dream come true.

And it isn't just about flat, two-dimensional windows either. Any computer-generated object, 2D or 3D, is fair game. With the whole three-dimensional world as the backdrop, you aren't really limited in what you can see. Your hands, on the other hand, might be a different story.

The reality

Although there is always room for improvement, like a wider field of view, the likes of the HoloLens, the Meta 2, and the Magic Leap may have the visual part of augmented reality mastered. Overlaying computer-generated content on top of real-world objects, more or less accurately, has been made easier by depth-sensing cameras. But that's only half the AR equation. Even more than virtual reality, AR is heavily dependent on the input method as well. And where the AR dream is concerned, that input ideally should be our bare, ungloved hands. And that is, perhaps, the biggest challenge for AR right now.

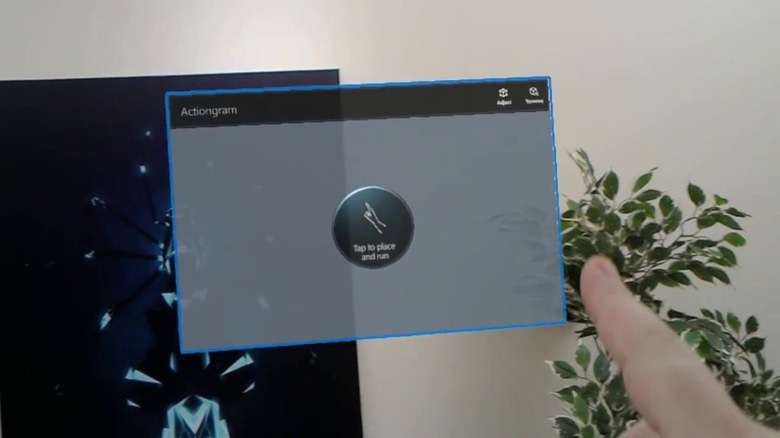

Different companies are approaching the problem in different ways. Microsoft, at least for now, seems to be going for a simpler but less natural solution of "clickers" and simple air-tapping gestures. The likes of Magic Leap and Meta aim for an interaction method that lets you manipulate virtual objects the same way you would if they were physical ones: tapping at edges to send windows away, grabbing objects by their ends to pull them apart, and the like.

While already problematic from a technology point of view, this is even more so from a human-computer interaction (HCI) perspective. It boils down to the same problem that plagues smartphone touch screen users: haptic feedback. Our hands, specifically our fingers, aren't just pointers like a mouse cursor. They can feel, produce, and differentiate varying levels of pressure, a fact that Apple has tried to exploit with its 3D Touch technology. HCI experts and, amusingly, gamers have bemoaned how touch screens deprive our fingers of the much-needed haptic feedback that keypads and gamepads afforded. With AR, it is even worse. There is literally nothing to touch, not even a touch screen.

In effect, interaction will be completely reliant on our eyes. Our fingers, while free to move as they please and more naturally than if holding a mouse or a controller, will really be more like a glorified 10-pointer mouse. Whereas we rely on our fingers for feedback that we actually did, or at least touched, something, even on a touch screen, in AR we have to trust the judgment of the computer that we did do something, and our eyes for visual feedback. And we know how easily our eyes can be tricked. And that's not even taking into account accuracy and precision, where haptic feedback plays a greater role in the absence of instruments more precise than our fingers. If accurately navigating a touch screen was already difficult, imagine poking at thin air.

The future

The good news is that this situation represents only current technology and current human behavior. It says nothing about what's possible in the future, whether near or distant. Problems of precision and accuracy are partly due to current user interfaces that aren't even fit for our stubby fingers. It might be fun to have all your regular app windows spread around you, but trying to work with them might be less than entertaining. Fortunately, that's a software problem that's easier to address than, say, haptic feedback.

That one is trickier, especially if you stick to the no-gloves requirement. The solution, however, might actually lie in the human mind. The brain is an amazing organ that, over time, can adapt to the constraints given to it. Today, we can observe people who are just as dexterous and agile on touch screens as the previous generation was with their physical buttons and keypads. While some still miss the haptic feedback that distinguishes the "boundaries" between buttons, people have learned to make do. Perhaps in that AR future, our eyes, our hands, and our brains will have adapted to the point of making these problems moot. That might bring a whole different set of problems for the human mind and body, but at least it won't be a problem for AR.

Closing Thoughts

Augmented reality is amazing, perhaps even more than VR, full of potential, and incredibly young. The dream of mixed reality has existed for years, even decades, but it is only now that technology has matured enough to make it even minimally possible. There is still a lot of work ahead for pioneers, which is why all devices today are sold as very non-final, not to mention expensive, development kits. The dream and the marketing would like us to believe we can all be like Tony Stark in his basement lab, but put that headset on anyone outside the development or marketing team and you're bound to see those finger problems. Augmented reality could very well be the future of computing, but, despite the progress and the hype, we are still far, far away from that future.